Blog Analytics

As mentioned in my earlier post, Modernise infrastructure of this blog, I also wanted to move this blog's CloudFront access logs to a more modern solution. The goals were fast queries, low cost and visual dashboards. Initially I planned to use S3 log delivery in Parquet format + Glue Data Catalog + Athena + QuickSight. The experiment turned out the opposite of what I expected, and I abandoned that solution entirely. For now, I have settled on Datadog.

Here is what happened:

- Parquet with partitioning was not as fast as I expected, because I had misunderstood what Parquet is for: it pays off with large files. My blog gets very little traffic, so each access log file is small, but there is a huge number of them. According to a Reddit discussion, this is a classic anti-pattern for Parquet because it introduces more overhead than benefit. In my case, queries were unbearably slow: each one took at least 30 seconds, and at first I thought I had set something up incorrectly;

- The cost of this solution wasn't actually low. I didn't calculate exactly how much it should cost, so maybe I misconfigured something. On the day of the experiment, my bill suddenly surged past $10, which showed up as 2000% more than my usual spend. It was quite an entertaining sight, and I immediately knew something wasn't right;

- QuickSight is not free, and there is no pay-as-you-go (PAYG) model. It requires a subscription, and the cheapest one is $24 per user/month. I had assumed all AWS services were charged the same way, and I only found out after finishing the whole Athena setup. This price was the final verdict that the solution was not for me. Even though I wanted to practise with AWS so that I would be better prepared if I ever need to design a similar solution for a client, I am not willing to pay that much just for a hobby;

- Switching to Datadog was much easier than the Athena + QuickSight solution. All I needed to do was set up the Datadog AWS integration, which is easily done by deploying a CloudFormation stack provided by Datadog. Then I just had to add an event trigger on the log forwarder Lambda function for the S3 bucket used by the log delivery. I did need to switch the log format back to W3C so that the logs stay plaintext and can be parsed by Datadog.
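
The S3-to-Lambda trigger step could be scripted roughly as below. This is a minimal Python/boto3 sketch, not an official Datadog script: it assumes the Datadog Forwarder Lambda already exists, and every bucket name, ARN, prefix and statement ID is a hypothetical placeholder. The function's resource policy has to allow S3 to invoke it, so the sketch grants that permission before attaching the bucket notification. S3 can only notify a Lambda function in the same region as the bucket.

```python
"""Sketch: invoke a log-forwarder Lambda whenever a new log object lands in S3.

All bucket names, ARNs, prefixes and statement IDs are hypothetical placeholders.
"""

from typing import Any, Dict


def build_notification_config(lambda_arn: str, prefix: str = "") -> Dict[str, Any]:
    """Return an S3 notification configuration that calls `lambda_arn`
    for every newly created object, optionally limited to a key prefix."""
    rule: Dict[str, Any] = {
        "LambdaFunctionArn": lambda_arn,
        "Events": ["s3:ObjectCreated:*"],
    }
    if prefix:
        # Only fire for keys under the log delivery prefix.
        rule["Filter"] = {"Key": {"FilterRules": [{"Name": "prefix", "Value": prefix}]}}
    return {"LambdaFunctionConfigurations": [rule]}


def apply_trigger(bucket: str, lambda_arn: str, prefix: str = "", region: str = "us-east-1") -> None:
    """Allow S3 to invoke the function, then attach the bucket notification.

    The bucket and the function must be in the same `region`.
    """
    import boto3  # imported lazily so build_notification_config needs no AWS SDK

    boto3.client("lambda", region_name=region).add_permission(
        FunctionName=lambda_arn,
        StatementId="allow-s3-log-bucket",  # hypothetical statement ID
        Action="lambda:InvokeFunction",
        Principal="s3.amazonaws.com",
        SourceArn=f"arn:aws:s3:::{bucket}",
    )
    # Note: this call replaces the bucket's entire notification configuration.
    boto3.client("s3", region_name=region).put_bucket_notification_configuration(
        Bucket=bucket,
        NotificationConfiguration=build_notification_config(lambda_arn, prefix),
    )
```

A call might look like `apply_trigger("my-log-bucket", "arn:aws:lambda:us-east-1:123456789012:function:DatadogForwarder", prefix="cloudfront/")`, with all values being placeholders. Because `put_bucket_notification_configuration` overwrites any existing notifications on the bucket, a real script should merge with the current configuration first.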

During these experiments, I also replaced the old S3 (Legacy) log delivery type with the new S3 log delivery type. I don't know the internals of the legacy S3 log delivery, but the new type is backed by CloudWatch Logs. On the IaC side, there were two notable lessons:

- The underlying CloudWatch Logs delivery resources must be created in the us-east-1 region because CloudFront is a global service, but the destination S3 bucket can be in any region. If the regions differ, cross-region data transfer fees apply (USE1-DataTransfer-Out-Bytes);

- When using Terraform to manage the CloudFront access log delivery, there is no parameter to control partitioning (Terraform documentation: aws_cloudwatch_log_delivery_destination V6.34.0); however, when no value is set, partitioning is applied automatically. I spent a lot of time trying to hack around this to enable partitioning. I failed, but then found, to my surprise, that no hack was needed at all.
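
For reference, the resources involved (a delivery source, a delivery destination, and the delivery that links them) correspond to CloudWatch Logs API calls. Below is a minimal boto3 sketch of that chain, assuming the client is created in us-east-1 as described above. The resource names are hypothetical, and the sketch leaves out the S3 bucket policy that must allow the log delivery service to write objects. Like the Terraform setup, it passes no S3 partitioning settings, so the default applies.

```python
"""Sketch: CloudFront access logs -> CloudWatch Logs vended delivery -> S3.

Resource names and ARNs are hypothetical placeholders.
"""

from typing import Any


def setup_cloudfront_log_delivery(
    logs_client: Any,
    distribution_arn: str,
    bucket_arn: str,
    name_prefix: str = "blog-cf",  # hypothetical naming scheme
    output_format: str = "w3c",
) -> str:
    """Create the source -> destination -> delivery chain and return the delivery ID.

    `logs_client` must be a CloudWatch Logs client in us-east-1, because
    CloudFront is a global service; the bucket itself may be in any region.
    """
    source_name = f"{name_prefix}-source"
    logs_client.put_delivery_source(
        name=source_name,
        resourceArn=distribution_arn,
        logType="ACCESS_LOGS",
    )
    destination = logs_client.put_delivery_destination(
        name=f"{name_prefix}-s3",
        outputFormat=output_format,  # "w3c" keeps the logs plaintext for Datadog
        deliveryDestinationConfiguration={"destinationResourceArn": bucket_arn},
    )
    # No s3DeliveryConfiguration is passed, so partitioning stays at its default.
    delivery = logs_client.create_delivery(
        deliverySourceName=source_name,
        deliveryDestinationArn=destination["deliveryDestination"]["arn"],
    )
    return delivery["delivery"]["id"]
```

Usage might look like `setup_cloudfront_log_delivery(boto3.client("logs", region_name="us-east-1"), "arn:aws:cloudfront::123456789012:distribution/EXAMPLE", "arn:aws:s3:::my-log-bucket")`, again with placeholder ARNs. Taking the client as a parameter also makes the function easy to exercise without AWS credentials.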

With the current solution, I can use the Datadog free plan, which gives me 1 day of data retention. This setup costs me around $0.15 per day in CloudWatch charges, which adds up to around $5 per month. That makes me want to go back to S3 (Legacy) mode, because it writes logs directly to the S3 bucket without going through CloudWatch Logs. I chose the newer v2 solution to be future-proof, but it is more costly, and I don't really need its real-time delivery and streaming capabilities, so switching the log delivery mode back makes sense. I may do that soon.

On another note, I am also looking for a better alternative to Datadog for hobbyist projects. I have shortlisted a few candidates and might try them when I have time.

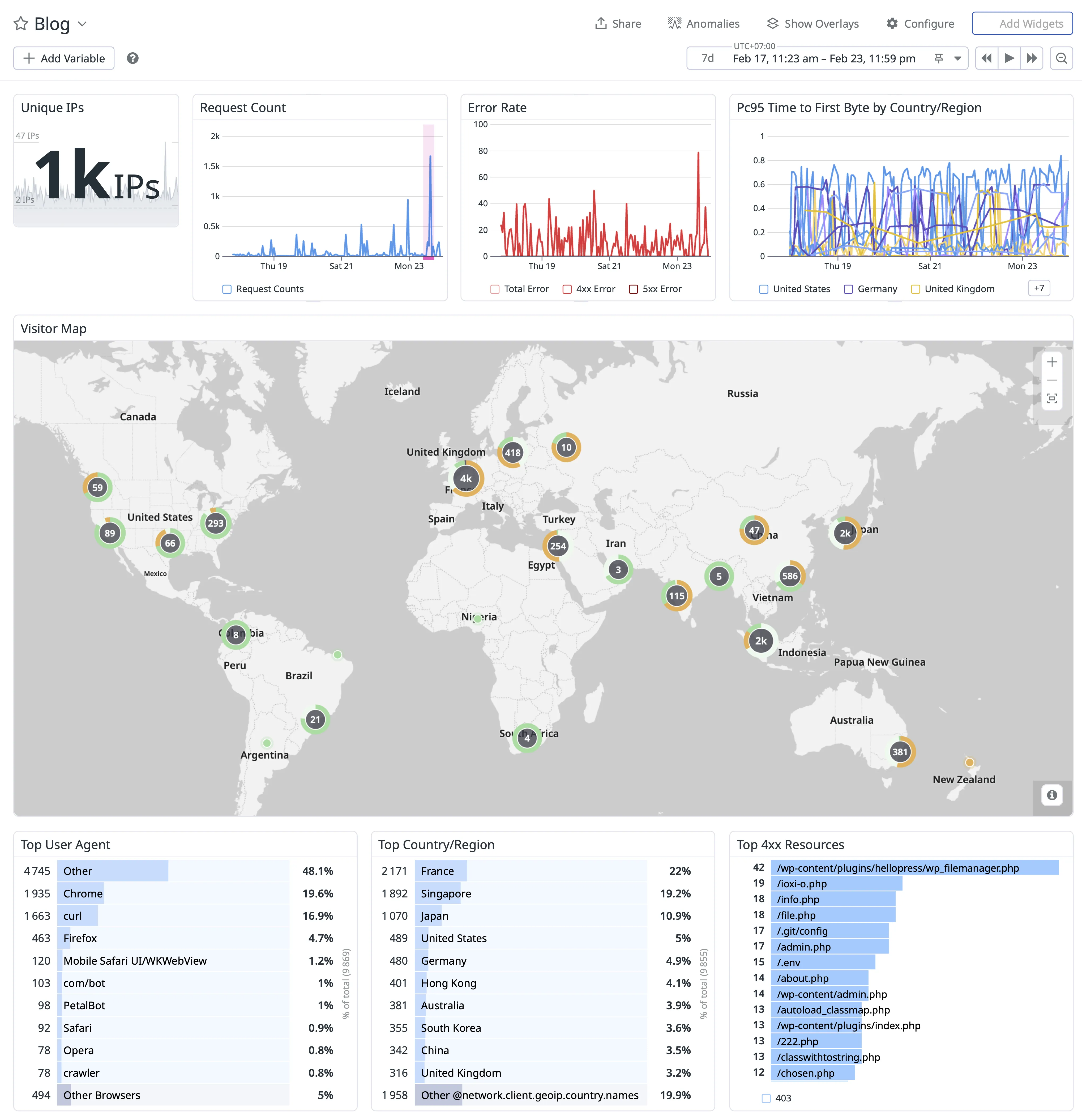

For now, here is a recent analytic for my blog. This is during my Datadog trial period, so I have the data of a full week.

The dashboard suggests a few things:

- The latency across the globe is very low (less than 1 second);

- All errors are 4xx errors (expected, since this blog has no backend);

- Most requests come from crawlers/bots, and some appear to be security research or vulnerability scanning (such as /.env and /.git/config).
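
A rough local version of these dashboard observations can be computed straight from the W3C log files. This is a minimal sketch, assuming the log text has already been downloaded and decompressed and follows the W3C layout: a `#Fields:` header followed by tab-separated records. The field names `sc-status`, `cs-uri-stem` and `cs(User-Agent)` follow CloudFront's standard log fields, but should be checked against the `#Fields:` line of your own logs. The bot keywords and probe paths are illustrative assumptions, not how Datadog classifies traffic.

```python
"""Sketch: summarise CloudFront W3C access logs (status classes, bots, probe paths).

The bot keywords and probe paths are illustrative assumptions.
"""

from collections import Counter
from typing import Dict, Iterable, Iterator, List
from urllib.parse import unquote

BOT_KEYWORDS = ("bot", "crawler", "spider")  # hypothetical heuristic
PROBE_PATHS = ("/.env", "/.git/config")  # examples seen on the dashboard


def parse_w3c(lines: Iterable[str]) -> Iterator[Dict[str, str]]:
    """Yield one dict per log record, keyed by the names in the #Fields header."""
    fields: List[str] = []
    for line in lines:
        line = line.rstrip("\n")
        if line.startswith("#Fields:"):
            fields = line[len("#Fields:"):].split()
        elif line and not line.startswith("#") and fields:
            yield dict(zip(fields, line.split("\t")))


def summarise(records: Iterable[Dict[str, str]]) -> Counter:
    """Count requests per status class, bot-like user agents and probe paths."""
    counts: Counter = Counter()
    for rec in records:
        counts["total"] += 1
        counts[rec.get("sc-status", "?")[:1] + "xx"] += 1  # e.g. "404" -> "4xx"
        # CloudFront URL-encodes the user agent, so decode before matching.
        agent = unquote(rec.get("cs(User-Agent)", "")).lower()
        if any(word in agent for word in BOT_KEYWORDS):
            counts["bot"] += 1
        if rec.get("cs-uri-stem", "") in PROBE_PATHS:
            counts["probe"] += 1
    return counts
```

Keying records by the `#Fields:` header rather than by fixed column positions means the parser keeps working if the delivery is configured with a different set of fields.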

Going forward, on the Datadog free plan, I will only keep the data for 1 day, which limits how far back I can look at recent traffic. Still, this POC has proven successful. There is room for improvement, both in cost savings and in finding a better Datadog alternative. I will revisit that when I have time.